Preparing for internet outages

Have any spares? This legacy PCI LAN card provides a 100 Mbit network interface jack for wired networks, making the card a great target for damage from induced voltage spikes on the Ethernet cable caused by lightning or arc flashes. Go for a fiber-optic connection from your ISP to help protect your in-house networks.

Photos courtesy of Wayne Labs

Seems intuitively obvious…But if you’re connecting important process data to the cloud/internet and expect continuous updating of vital data, having no backup plan for loss of internet could leave you floundering.

New food and beverage plants have “walkable” ceilings with drops for power conduits and network cabling, keeping both out of harm’s way on the plant floor.

Remember when the internet was spelled with a capital “I”—that is, the Internet? Today, we regard the internet as just another utility: electricity, city gas, water, etc. But like any utility, it can go down unexpectedly—whether due to internal or external circumstances. Internal, plant-related issues are under your control and should be mostly preventable, but unfortunately external interruptions can happen for most any reason (e.g., storms, politics, hackers, excess traffic, technical issues, etc.), and you should be prepared for these outages. With a little due diligence and careful planning, you can minimize the deleterious effects of internet outages to your business.

External outages beyond your control

Over the last decade or two, the internet has generally not only improved in speed and performance, but also has become more reliable as connections evolved from dial-up modems to cable, satellite and fiber optic. But it’s still not perfect.

Internet connectivity is generally reliable in most areas of the world, but it is still far less dependable than electrical power from the grid, says Don Pham, IDEC Corp. senior product marketing manager. Industrial customers taking advantage of the internet face the same challenges as commercial and even personal users.

Related Articles

There are a number of external factors that can lead to an outage, says Chad Clemens, controls engineer at CHL Systems. Obvious causes, for example, would be auto accidents taking out a pole that delivers service to your property or extreme weather events. An ISP (internet service provider) could have a major problem (e.g., a key router or gateway goes down) that interrupts service temporarily.

External failures are more often the result of a component failure, says Steve Pflantz, CRB associate/senior automation engineer. “Things get old, exposed to weather, and they fail. There is the old squirrel that gets in the box and chews up the wires or builds a nest and causes something to overheat or catch fire, but that dramatic cause is rare.”

Rare, maybe. But I’ve seen a squirrel fried when reaching between two legs of a 13,000 volt-plus, three-phase transmission line on a pole on our old street, tripping a breaker that shut down part of our town’s electricity until the electric company removed the carcass. Another rare occurrence I’ve seen was a squirrel’s gnawing away of an overhead service, copper-wire phone line’s insulation, corroding and opening the circuit of one conductor and making the line intermittently inoperable. In damp weather, the moisture in the air completed the circuit and the line worked normally; in extended dry weather, the circuit would open, and the line stopped working—quite the opposite cause of what the phone company technician expected.

Fortunately, says Pflantz, communications companies have seen all sorts of unimaginable problems and have learned from them, preventing weird things from happening that disrupt service. Buried fiber-optic cables now replace 1970s vintage underground copper lines where cracks and ingress from tree roots and water disrupt communications. “Those are the extremes, but I encourage people to find out what they have coming in so they can assess the condition and potential reliability before they rely on that communications link,” adds Pflantz.

Internal issues: your responsibility

Inside the walls of the facility, internet connectivity is your responsibility. With the exception of minimizing lightning damage, most internal internet failures can be prevented through good design principles and employee education. Clemens notes that accidents (e.g., those caused by forklifts) and localized fires can disrupt and destroy communications wiring. There could be server failures or accidental removal of a key cable, which could create lengthened and partial outages.

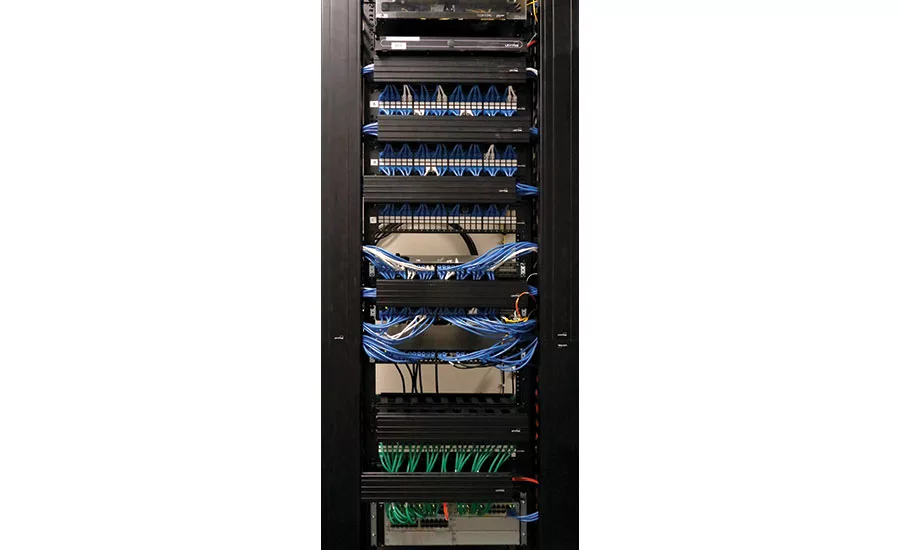

In chemical plants, distributed control system “home run” cabling was always put in conduits and up and away from people and equipment. Today most new food and beverage plants have essential cabling and plumbing in mezzanine areas with drops to the production area. However, with existing small and medium facilities where LAN wiring and equipment placement is often an afterthought, there are bound to be problems associated with facility growth.

Pflantz offers some common sense advice. Put network equipment in a place where it is physically protected from damage and in an environment that it was designed to be in. If you are going to rely on it for critical information and function of your facility, protect it and take care of it. Don’t put your modem or router on top of a file cabinet in the file room; give it a real home—an equipment cabinet. Install equipment and cables safely out of harm’s way. Secure cables so they do not fall on the floor or cause someone accidentally to trip over one and yank it loose. “This sounds silly, but I see these things more than one would think,” says Pflantz.

Other outage causes are not so obvious. “From our focus on operations at the edge of the network, we primarily see internal causes of failure: cybersecurity vulnerabilities and instability in network infrastructure,” says Josh Eastburn, Opto 22 director of technical marketing. These can result from complex architectures and heterogeneous devices that are difficult to manage and a large installed base of legacy hardware that is not security oriented. This is further complicated by the sometimes confusing relationship between IT and OT groups, which can leave control system networks without appropriate maintenance or redundancy, says Eastburn.

Another source of internal internet failures is user error, says Joseph Clussman, Stellar process controls engineer. Firewall and router rules can get deleted, or new rules may be added that block protocols or devices. Sometimes equipment may be switched off or rebooted without consideration of the consequences, and IP addresses can be duplicated. Well-meaning, security-minded people may sometimes even unplug cables that they thought were not in use and trigger a failure. Wireless routers can also get jammed by nearby offices or their range reduced by personal Wi-Fi hotspots, says Clussman.

Lightning: An external and internal threat

Lightning straddles external and internal causes of internet outages. Though unlikely, it’s a very real source of failures, says Michael McCollum, Stellar process engineer. “Even with a UPS and surge protector on incoming power, lightning can travel through telecom lines and blow up a lot of sensitive equipment, leading to a possibly catastrophic situation,” he says.

For example, at a prior editorial office where I worked, lightning took out the ISP’s router in a properly protected telecom equipment cabinet, then traveled via network cabling in this case, taking out the file server in the office equipment room, printer network card and network cards of three workstations. Getting back up and running took a few days. Can you afford to be down that long?

Even with professionally installed, properly grounded industrial sites and equipment, a lightning strike can seemingly defy the laws of physics, and as McCollum says, lead to a potentially grave outcome. In previous engineering positions, I’ve seen the results of lightning strikes: completely vaporized 12-ft. VHF radio transmitting antennas, exploded and demolished three-phase industrial breaker/service panels, and physical damage to walls, floors, equipment cabinets and melted conduits—and the list goes on. Lightning-induced currents can follow underground wiring between poles or towers and into buildings via utility service entrances. Even “protected buildings” are not necessarily safe from lightning.

Takeaway: Locate internet wiring and network cabling away from large electrical power circuits that can conduct high voltages and currents caused by lightning. If you can get it, use an ISP’s fiber-optic internet to eliminate another route for lightning-induced charges to enter your building.

Prepare for failures

Assuming your internet outage is external and due to an ISP problem, there are potential workarounds. “A big recommendation would be to have multiple ISP’s if that is feasible,” says CHL Systems’ Clemens. The ability to fail over to a second ISP can prevent long disruptions and make outages just minor blips. There are also pieces of equipment that can be installed on production lines, allowing them to maintain cloud communication despite loss of the internet.

CRB’s Pflantz suggests that an alternative ISP may be a solution, but another approach would be a second link from the same ISP, as there is a possibility that a second link isn’t served by the same ISP gateway, nor does it necessarily take the same routing. Processors should evaluate the cost versus the risk.

If a production line needs ongoing connection to the internet, there are a couple of solutions, says Chris Noble, Emerson Automation Solutions business development manager for factory automation. Use edge devices that do some data preprocessing and can store data locally until an internet connection is reestablished. (We’ll talk more about this later.) In addition, some line equipment will offer a cellular option, so it can take over when local internet fails and establish alternative communications.

Why cellular? Opto 22’s Eastburn points out that while it’s usually more expensive, its failure points are typically orthogonal to the primary ISP approach, meaning that there’s a good chance that cellular internet communications will be there when the ISP isn’t.

Keep in mind that establishing a cellular connection will not likely have the performance or reliability of a wired or fiber connection, says IDEC’s Pham. However, if a processor has a critical reliance on internet connectivity, then a redundant cellular option may indeed be worthwhile.

Another wireless option may be available as a backup, says Stellar’s Clussman. There are wireless providers in major metro areas that can install a directional antenna on a building’s side or roof. These wireless antennas could be used as backup to wired connections. Granted, the downsides of wireless usually include lower bandwidth (slower data speeds) and higher latency. If an outage is going to last a long time, the IT department could manually switch over to the wireless option.

Store and forward: Good for short term and long term

Internet outages do not always directly affect a production line, says CHL Systems’ Clemens. Many production lines are controlled by their own processors and do not need to report to a centralized location. However, that is not always the case, as some operations have a centralized location making decisions based on the data being reported. As we’ve seen, there are some devices that can still get the data to the cloud during loss of internet. Even without having the failover, they have the ability to maintain an I/O server, mirroring the tag values from the PLC/HMI locally and then passing them to the cloud the next time a connection is available.

“You want to operate under the assumption that you will lose the internet at some point,” says CRB’s Pflantz. Control systems should be designed to carry on and stay safe if higher level communications are lost. “What you are doing over that link should have some sort of DMZ or buffer so a disruption of internet communications will not instantly shut you down,” says Pflantz. Most control systems have data collection software and hardware solutions that will allow portions of the system to fail or go offline, and the data will be buffered or stored temporarily in a secondary location until things are fixed.

“You want to operate under the assumption that you will lose the internet at some point.”

– Steve Pflantz

For example, ICONICS IoT Hyper Collector is part of ICONICS IoTWorX, which is a micro-SCADA software suite installed on a third-party edge device that can be configured either via the cloud or locally, says Tom Buckley, IoT business development manager.

“We have a feature called store-and-forward on some of our edge devices, like the Emerson CPL410 and the AVENTICS Smart Pneumatics Monitor (SPM),” says Noble. If the internet connection is lost, the device will actually store the data, then forward it when service is restored. It’s also possible to store raw data, but it’s a good idea to decide how much data needs to be stored in the edge device and how often so its memory is not filled up.

“Store-and-forward is one feature we include in our products,” says Opto 22’s Eastburn. “In our case, we can provide up to one week of data protection and then automatically synchronize to central systems when connectivity is restored.”

However, if cloud-based data is used to make real-time adjustments to the process—meaning there is data flowing out to or between remote sites—then those sites need to have a redundant local process interface in order to make those adjustments for the duration of the outage.

Edge computing provides more options here as well, says Eastburn. It’s now possible to host database servers on local process controllers, in addition to remote cloud servers. Opting for an edge-first approach means that the local process can still have unrestricted access to data sources during an outage.

“For mission-critical IIoT applications, we encourage users to consider building their infrastructure around the MQTT communications protocol and Sparkplug standard because of their inherent consideration for network instability,” adds Eastburn. Originally designed for high-latency networks with intermittent communication, they offer many features that help systems cope with short-term connection loss.

“If the IIoT device is using MQTT, think about using a local ‘broker’ to store the data, as opposed to an off-site or cloud-based one,” says Stellar’s Clussman. When communication is restored, the topics can be played back if set for this mode.

Short-term vs. long-term outages

How you plan for outages will depend on your application. If the internet/cloud is used for PdM applications, then outage length is not so crucial. “We’re not controlling the process with IoT, we’re monitoring it,” says Emerson’s Noble. For a short-term outage, the messages will still be there as soon as the internet comes back. For long-term outages, the system may not be able to store all the data, but the messages will be saved. “So if the internet goes off at 2 o’clock and comes back on at 4 o’clock, you’re not really going to miss anything,” says Noble.

PdM systems should not assume the worst during short-term outages, says Stellar’s Clussman. Algorithms should be able to tolerate reasonable gaps in data. If the outage was the reason, any substitute values out-of-range that would cause unnecessary servicing work should be ignored. The alarm should be “communication lost,” not cryptic failures or serious false alarms that would call emergency services or mobilize service personnel unnecessarily.

With PLC-connected devices, short-term outages are less of a nuisance because the data can be mirrored from the PLC/HMI and then be transmitted when the internet connection is back up, says Clemens. But if a process is dependent on responding to that data, simply mirroring would not be enough in the event of a long-term outage. In that case, more time should be invested up front to develop the backup scenario with a second module (cellular) and/or a second ISP connection.

Design your controls to deal with an outage on the order of hours, says CRB’s Pflantz. Make sure your system can buffer data for, say, eight hours. That gives someone time to wake up, drive to the shop or office, get the spare part out of storage, swap it out and fire the system back up. An outage beyond a standard eight-hour day is more of a Plan B alternate solution for you to consider. As long as you are not doing critical real-time control over an internet link, you can run for a shift or so while you get a backup server in place to store more data short term.

Dealing with local outages

The most common local failures tend to be hardware or component related, followed by the much rarer media (cable/fiber) failures, says Pflantz. Software issues fall somewhere in the middle but should be fairly infrequent if controls are well designed and managed. The same is true with virus or cyberattacks, which can be rare, but bad if a system isn’t well protected.

“Other internal failures I’ve seen are electrical brownouts/phase leg loss damaging routers and servers,” says Stellar’s Clussman. Then there are the freak occurrences. For example at one facility, an arc blast during servicing of an overhead crane—an incident that should have never occurred had safety procedures been followed, and luckily caused no injury to personnel—caused cascading effects, taking out power supplies in server room equipment. The takeaway from this is that sometimes UPS (uninterruptible power supplies) and surge suppression are often considered the same thing. Unfortunately, in the case of large power surges, having spares on the shelf onsite or a nearby supplier is mandatory, says Clussman.

Make sure the installation is robust, says Stellar’s McCollum. Accidents will happen that will cause communication errors, but top-end hardware will be more reliable than bargain brands. The same goes with cables. Ethernet cables made up in the field are notorious for having loose connections that can fail over time or cause intermittent issues that are hard to trace. Make sure all cables (including fiber) are tested after being made up and before startup.

Learn more about automation technologies in food & beverage processing plants

Having spare parts and cables on hand is always recommended, adds McCollum. In conjunction with properly setup managed network switches, the point of failure can be easily identified and fixed. If the manufacturer is in a pinch, like say a conduit is hit and damages an Ethernet cable, you could have a 100-300 ft. cable on hand to connect temporarily one point to another until there’s some scheduled downtime to make repairs. It might just save a production run one day. Keep an Ethernet cable tester on hand to check cables for problems.

“Design your system around the fact that things can happen,” says Pflantz. “Plan and spend in relation to the likelihood and risk/damage potential. And use care in how you use the internet and IIoT functionality and connections in your live process and production. Using the cloud to make production decisions is fine, but limit or plan around the reliance upon real-time data flow and access. As you move up the network level, things you are doing should be less real-time critical, more supervisory in nature.”

Tips to prevent internet/network failures

This list from the experts interviewed is by no means exhaustive but offers a starting point to minimize outage risks.

- Have spares of all critical hardware on hand (e.g., routers, switches, cables, connectors, network cards).

- Back up and document router, switch, server configurations. Image server hard drives and store in a safe place offline.

- Have maps of the network available showing cable routes, router and switch locations and their IP addresses/ranges, and other key equipment.

- Know what equipment should and shouldn’t be on the network.

- Label cables coming into switch and router ports.

- Keep cables in conduits and off the floor and away from washdown areas.

- Separate the control network from the internet; secure sensitive equipment ports from the internet.

- Avoid complicated infrastructure. Eliminate single points of failure.

- Avoid using equipment with small power supply “cubes.” If they must be used, put them in an equipment cabinet.

- Monitor the network for traffic, especially that which doesn’t belong.

- Make sure power is stable, filtered and has surge suppression. Keep sensitive network equipment and cabling far away from devices generating arc flashes—such as welders and contactors. Run network cabling perpendicular to heavy power wiring—not parallel to it.

- Keep subsystems, such as video and others with heavy data usage, off plant networks—with the exception of video inspection systems on local machine networks.

- Use spare network cards in case a PC’s motherboard Ethernet port is taken out by induced voltage spikes on the wired network. A spare network card may save having to replace an entire motherboard—as long as no other damage occurred. Use fiber-optic cards and network to alleviate the problem altogether.

- An old radio engineer’s trick: Leave a 1 to 2 ft.-diameter loop in a cable to discourage lightning traveling down the wire.

For more information:

CHL Systems,

www.chlsystems.com

CRB,

www.crbusa.com

Emerson Automation Solutions,

www.emerson.com/en-us/automation-solutions

ICONICS,

www.iconics.com

IDEC Corporation,

www.idec.com

Opto 22,

www.opto22.com

Stellar,

www.stellar.net

“How to avoid the high cost of IoT network outages,” David McKay, Wave7, https://wave7.co/blog/how-to-avoid-the-high-cost-of-iot-network-outages

“Cybersecurity helps manufacturers create more secure, resilient networks,” FE, February 2019

Looking for a reprint of this article?

From high-res PDFs to custom plaques, order your copy today!