Innovation

In-home testing offers numerous benefits for product developers

In-home usage testing (iHUT) traces its origins to the consumer packaged goods (CPG) industries and the formation of the first marketing research departments during the 1920s and 1930s. With the end of World War II in 1945, the practice of in-home usage testing spread rapidly.

Returning soldiers, new marriages, new babies and a population deprived of many consumer goods through the Great Depression and the war years fueled rapid growth in consumer goods industries after the war.

The widespread adoption of iHUTs by CPG companies led to dramatic improvements in product quality and consumer acceptance of store-bought packaged foods, beverages and household consumables. The humble iHUT received little credit for CPG growth after the war, but the importance of its contributions cannot be overstated.

iHUTs were (and are) used for many purposes; the most important are:

- To achieve product superiority over competitive products.

- To continuously improve product acceptance as consumer tastes evolve over time.

- To monitor the potential threat levels posed by competitive products.

- To reduce costs of product formulations and/or processing methods, while maintaining product quality.

- To measure the effects of aging upon product quality (shelf-life studies).

- To monitor product quality from different factories and through different channels of distribution.

- To predict consumer acceptance of new products.

Companies committed to rigorous iHUTs and continuous product improvement can, in most instances, achieve product superiority over their competitors. This superiority, in turn, helps build brand share, magnifies the positive effects of all marketing activities (advertising, promotion, selling, etc.), and often allows the superior product to command a premium price.

In today’s short-term, highest-profit-margin world, most corporations, unfortunately, don’t use iHUTs as they did during the golden age of consumer packaged goods. Many of the corporate leaders in iHUTs 75 years ago, if still in business today, have lost the art of in-home usage testing or the budgets to do serious in-home research. iHUT shortcomings in the majority of companies create opportunities for the minority of companies that are dedicated to product superiority and to continuous product improvement. What are some of the best practices CPG companies should pursue to fully exploit the value of iHUTs?

iHUT best practices

Recommended best practices to achieve accurate and actionable consumer product testing results:

- A systems approach. The methods and procedures of iHUTs should be a standardized system, with standard operating procedures, so that every like product is tested exactly the same way, including: identical product preparation, product age, packaging and labeling of test products; identical questionnaires (of course, parts of the questionnaire must be adapted to different product categories); identical sampling plans from iHUT to iHUT; identical data preparation and tabulation methods; and similar analytical methods.

- Normative data. As products are tested over time, the goal is to build normative databases, so that successive iHUTs become more meaningful and more valuable. The normative data, or norms, continually improve a company’s ability to correctly interpret its iHUT scores.

- Same research company. Use one research company for all of your iHUTs. This is the only way you can make sure all tests are conducted in exactly the same way.

- Real environment test. If a product is typically used at home, it should be tested at home. If the product is consumed in restaurants, it should be tested in restaurants, and so on. In general, this kind of “real environment” test produces the most accurate results. For food products, an in-home usage test is almost always the most accurate and the most predictive of future success.

- Relevant universe. Sampling is a critical variable in iHUTs. For new products or low-share products, the sample should reflect, or represent, the brand-share makeup of the market. For well-established, high-share (or highly differentiated) products, the sample should contain a readable sub-sample of that product’s users and a readable cell of nonusers. If the product category is underdeveloped (e.g., a relatively new category), then the sample should include nonusers of the category, as well as users. That is, if a company’s brand share is very low, it’s important to assign more weight (or importance) to the opinions of nonusers of the brand. If brand share is very high, then what brand users think is most important.

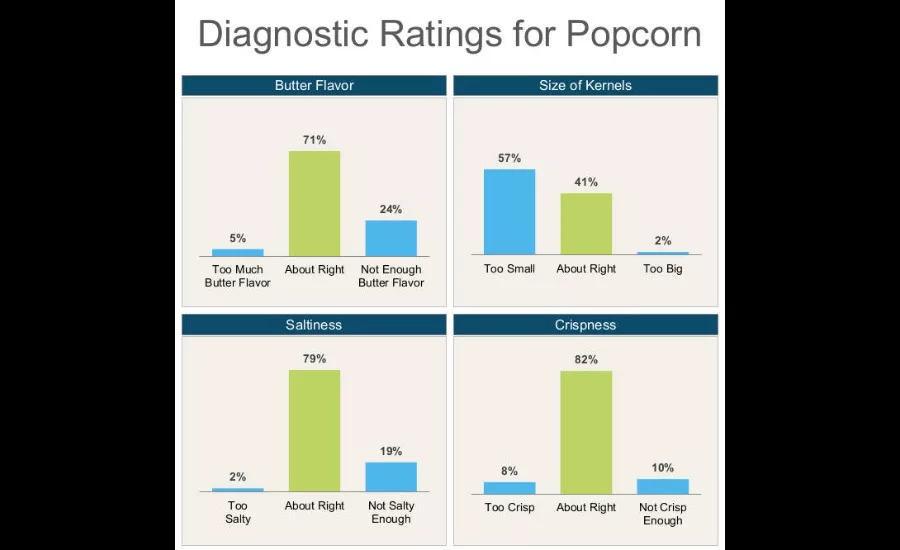

- Critical measures. Product performance and quality must be defined from the consumer’s perspective, not the manufacturer’s. What aspects of the product are truly important to consumers? These critical variables must be identified for each product category (typically, with focus groups or depth interviews) and incorporated into the standardized iHUT testing system.

- Careful and cautious. The formulation of an established product should never be changed without careful testing and evaluation of the new formulation. Once you are sure you have a better product based on iHUT testing, introduce the “better” product into a limited geographic area for a reasonable time period (several product repeat purchase cycles). Then, and only then, roll the new product out to all markets. The smaller the market share, the greater the risks that can be taken with a new formulation. The larger the market share, the more conservative one should be in introducing a new formulation.
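To make the normative-data practice above concrete, here is a minimal sketch of how a new test score might be positioned against a category's norms. Everything in it is an illustrative assumption rather than a method described in this article: the function name `percentile_rank`, the 9-point liking scale and the scores themselves are hypothetical.

```python
from bisect import bisect_left, bisect_right


def percentile_rank(norms, score):
    """Percent of normative scores below `score`, counting ties as half."""
    if not norms:
        raise ValueError("norms must contain at least one past score")
    ordered = sorted(norms)
    below = bisect_left(ordered, score)            # past scores strictly lower
    ties = bisect_right(ordered, score) - below    # past scores equal to it
    return 100.0 * (below + 0.5 * ties) / len(ordered)


# Hypothetical norms: mean overall-liking scores (assumed 9-point scale)
# from past monadic iHUTs in one product category. Scores from sequential
# monadic tests should be kept in a separate database, not pooled here.
category_norms = [6.1, 6.4, 6.8, 7.0, 7.0, 7.2, 7.5, 7.9]

new_score = 7.2  # hypothetical score from the latest test
rank = percentile_rank(category_norms, new_score)
print(f"New product sits at the {rank:.1f}th percentile of category norms")
```

A percentile like this is only as meaningful as the consistency of the tests behind it, which is why the systems approach and the normative database go hand in hand.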

Recommended techniques

The monadic, sequential monadic, paired-comparison and protomonadic research designs are the most widely used for product testing. Monadic and sequential monadic designs are recommended as the best methods for iHUTs, especially the monadic test.

Monadic testing

This is typically the best method. Testing a product on its own (by itself) offers many advantages. Interaction between products (which occurs in paired-comparison tests and sequential monadics) is eliminated. The monadic test simulates real life (that’s the way we usually use products – one at a time). By focusing the respondent’s attention upon one product, the monadic test provides the most accurate and actionable diagnostic information. Additionally, the monadic design permits development of norms and action standards.

Sequential monadic designs

These are often used to reduce costs. In this design, each respondent evaluates two products (he or she uses one product and evaluates it, then uses the second product and evaluates it). The sequential monadic design works reasonably well in most instances and offers some of the same advantages as pure monadic testing.

One must be aware of what we call the “suppression effect” in sequential monadic testing, however. All the test scores will be lower in a sequential monadic design, compared to a pure monadic test. Therefore, the results from sequential monadic tests cannot be compared to results from monadic tests. Also, as in paired-comparison testing, an “interaction effect” is at work in sequential monadic designs. If one of the two products is exceptionally good, then the other product’s test scores are disproportionately lower and vice versa. Asking consumers to test two products in their homes runs the risk of miscommunication and confusion between the two products, a significant disadvantage compared to a monadic design.

Paired-comparison designs

In this approach, the consumer is asked to use two products simultaneously and determine which product is better. The design appeals to our common sense. It’s a wonderful design if presenting evidence to a jury because of its “face value” or “face validity.” The paired comparison can be a very sensitive testing technique (i.e., it can measure very small differences between two products). Also, the paired-comparison test is often less expensive than other methods because sample sizes can be smaller in some instances.

Paired-comparison testing, however, is limited in value for a serious, ongoing product-testing program. The paired-comparison test does not tell us when both products are bad, does not lend itself to the use of normative data and is heavily influenced by the “interaction effect” (i.e., any variations in the control product will create corresponding variance in the test product’s scores). For in-home usage testing, there is the great risk that the two products will be confused by the respondent.

Protomonadic design

The definition of this term varies from researcher to researcher, but the method begins as a monadic test followed by a paired comparison. Often sequential monadic tests are also followed by a paired-comparison test. The protomonadic design yields good diagnostic data, and the paired comparison at the end can be thought of as a safety net, as added insurance that the results are correct. The protomonadic design is typically used in central-location taste testing, not in-home testing, because of the complexity of execution in the home.

Monadic research designs are recommended for iHUTs because virtually all consumer products can be tested monadically, and the results are free from interaction effects and suppression effects. Since only one product is tested, there is less chance for respondent confusion and error. Some products cannot be accurately tested in a paired-comparison design. For example, a product with a very strong flavor (hot pepper sauce, alcohol, etc.) may deaden or inhibit the taste buds so that the respondent cannot accurately taste the second product in a paired-comparison test.

While most iHUTs are conducted in the food, beverage and household consumables categories, the concepts and methods of iHUTs are applicable to many product categories, although the structure and mechanics of execution will vary.

For example, computer software, furniture, small appliances, large appliances, cosmetics, OTC medicines and toys can be tested in-home. Power tools, lawnmowers, trimmers, dog food, cat food and bug spray can be tested in-home. Any product used in or around the home can be tested in-home.

Ultimate advantage

The ultimate benefit of iHUTs is competitive advantage. Creating and maintaining a better product is the surest way to dominate a product category or an industry. Companies dedicated to ongoing in-home usage testing can achieve and sustain product superiority. Companies that ignore the learning and guidance from iHUTs, on the other hand, may wake up one morning to find themselves on the brink of extinction from a competitor who has built “a better mousetrap.”
